Enhancing 3D Sound Effects Over Headphones

This guest post on Innovation Intelligence was written by Vijay Ambarisha, Senior Project Engineer at Advanced Numerical Solutions (ANSOL). The company’s fast multipole boundary element software, Coustyx, is available through the Altair Partner Alliance (APA).

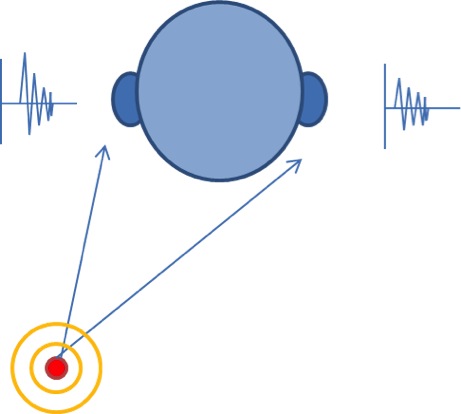

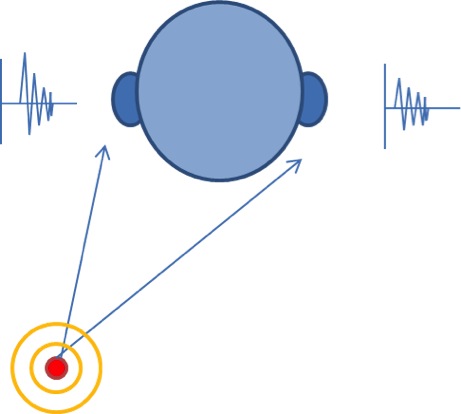

Next time you step outside your office or home, close your eyes and listen to the rich 3D sound around you. Even with your eyes closed, you can pinpoint the direction and approximate distance of the sounds you hear. Which characteristics of our auditory system enable us to perceive this 3D spatial information? Our outer ears (pinnae), head, and torso all act as acoustic filters that impart time and intensity differences to an incoming sound before it reaches each ear, and the brain decodes these differences into spatial information. These acoustic filters are described by the Head-Related Transfer Function (HRTF) in the frequency domain or by the Head-Related Impulse Response (HRIR) in the time domain.

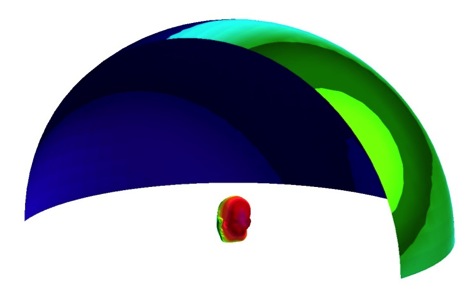

Fig. 1. Time and intensity differences of sound reaching our left and right ears provide spatial cues for sound localization in nature (3D perception)

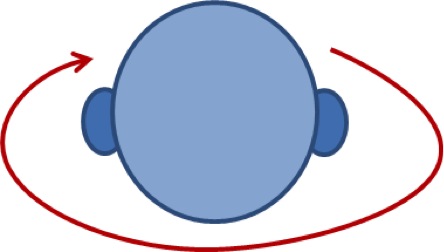

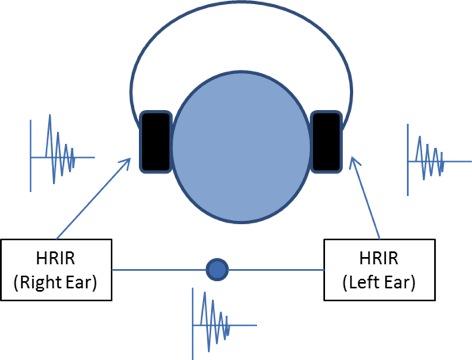

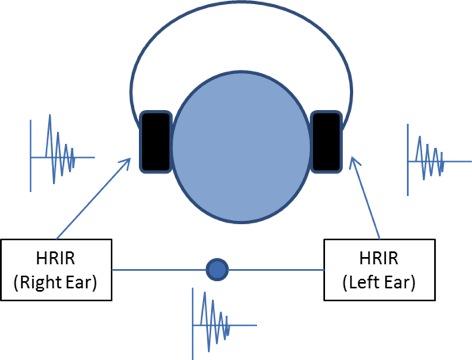

Fig. 2. 3D sound effects over headphones can be recreated from monaural recordings by applying HRIR or HRTF filters

Audio engineers widely use HRTFs and HRIRs to enhance sound effects and to optimize the design and configuration of devices such as loudspeakers, headphones, and cell phone speakers. Typically, HRTFs for various source locations are computed from impulse responses measured at the left and right ears of a dummy head, with sources placed some distance away from the head in an anechoic or semi-anechoic chamber. Because each individual’s head is different, HRTFs computed from a dummy head may not suit every listener. To enhance an individual’s audio experience, we need the HRTFs of that individual’s own head. However, measuring every individual’s HRTFs directly is impractical. Computational methods such as the fast multipole boundary element method provide a faster and more viable alternative: an individual’s head scan or two-dimensional photos can be used to generate boundary element models, which are then used to compute HRTFs. Researchers at the University of York in the UK and the University of Sydney in Australia have jointly created a large database, called the SYMARE database, which consists of high-resolution head models built from MRI scans of many volunteers. They have also evaluated the volunteers’ individual HRTFs, both computationally and experimentally.

Let’s now look at how HRTFs are applied to recreate 3D stereo effects over headphones from monaural recordings. HRTFs for the left and right ears of a representative head model are evaluated separately using the fast multipole boundary element software Coustyx. The reciprocity principle lets us evaluate the HRTFs for many source locations around the head in one go: a source is placed at the ear, and responses are evaluated at all of the locations of interest. This approach solves the problem efficiently over a wide frequency range spanning the entire human hearing range. HRIRs are then computed by applying an inverse Fourier transform to the HRTFs. Once the HRIRs between the various spatial locations and the left and right ears are available, the 3D spatial sound effect for a given location is recreated by convolving the monaural sound signal with the left-ear and right-ear HRIRs at that location.
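The two steps described above (inverse Fourier transform, then convolution) can be sketched in a few lines of Python with NumPy. This is only an illustration under stated assumptions, not the Coustyx workflow: the function names `hrir_from_hrtf`, `binauralize`, and `toy_hrtf` are hypothetical, the HRTF is assumed to be sampled on a uniform one-sided frequency grid (DC to Nyquist), and the "HRTFs" used here are toy delay-and-gain filters rather than computed data.

```python
import numpy as np

def hrir_from_hrtf(hrtf_one_sided, n_taps):
    """Inverse real FFT of a one-sided HRTF (DC..Nyquist) gives a real HRIR."""
    return np.fft.irfft(hrtf_one_sided, n=n_taps)

def binauralize(mono, hrir_left, hrir_right):
    """Convolve a mono signal with the left/right HRIRs of ONE source location."""
    left = np.convolve(mono, hrir_left)
    right = np.convolve(mono, hrir_right)
    return np.stack([left, right], axis=1)  # shape: (samples, 2)

# Toy stand-ins for computed HRTFs (an assumption, not real data):
# each ear is modeled as a pure delay (in samples) plus a gain.
n_taps = 64
k = np.arange(n_taps // 2 + 1)  # one-sided frequency bin indices

def toy_hrtf(delay, gain):
    return gain * np.exp(-2j * np.pi * k * delay / n_taps)

# Source on the listener's left: earlier and louder at the left ear.
hrir_l = hrir_from_hrtf(toy_hrtf(delay=2, gain=1.0), n_taps)
hrir_r = hrir_from_hrtf(toy_hrtf(delay=10, gain=0.5), n_taps)

rng = np.random.default_rng(0)
mono = rng.standard_normal(1000)          # placeholder for a mono recording
stereo = binauralize(mono, hrir_l, hrir_r)
```

With these toy filters the right channel is simply a delayed, quieter copy of the left one, which is the time and intensity difference cue in its crudest form. Real HRIRs also carry the frequency-dependent filtering of the pinnae, head, and torso.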

Play the sample monaural recording and its enhanced version with stereo effects below. The 3D effect of a source moving from the left ear to the right ear is recreated by convolving the original signal with the HRIRs at discrete points along the path.
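One plausible way to render such a moving source is to cut the mono signal into consecutive blocks, convolve each block with the HRIR pair for the source position at that moment, and overlap-add the results. The sketch below assumes this block-wise scheme; the post does not say how the signal was segmented, so `render_moving_source`, `block_len`, and the placeholder single-tap "HRIRs" are all hypothetical.

```python
import numpy as np

def render_moving_source(mono, hrir_pairs, block_len):
    """Overlap-add rendering: block i is convolved with HRIR pair i.

    hrir_pairs is a list of (hrir_left, hrir_right) arrays, one pair per
    position along the path, all of equal length. If there are more blocks
    than positions, the last pair is reused.
    """
    n_taps = len(hrir_pairs[0][0])
    out = np.zeros((len(mono) + n_taps - 1, 2))
    for start in range(0, len(mono), block_len):
        block = mono[start:start + block_len]
        idx = min(start // block_len, len(hrir_pairs) - 1)
        h_left, h_right = hrir_pairs[idx]
        seg_len = len(block) + n_taps - 1
        # Each convolution tail spills into the next block and is summed there.
        out[start:start + seg_len, 0] += np.convolve(block, h_left)
        out[start:start + seg_len, 1] += np.convolve(block, h_right)
    return out

# Placeholder "HRIRs" (an assumption, not real data): a single-tap gain per
# ear that pans from fully left (p = 0) to fully right (p = 1).
n_taps = 8
hrir_pairs = []
for p in np.linspace(0.0, 1.0, 5):
    h_l = np.zeros(n_taps)
    h_r = np.zeros(n_taps)
    h_l[0] = 1.0 - p
    h_r[0] = p
    hrir_pairs.append((h_l, h_r))

mono = np.ones(500)                       # placeholder mono signal
stereo = render_moving_source(mono, hrir_pairs, block_len=100)
```

Switching filters abruptly at block boundaries can produce audible clicks with real HRIRs, so a practical renderer would likely crossfade between neighboring positions or use a finer grid of points along the path.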

(A) Original audio file (monaural):

[audio mp3="https://blog.altair.com/wp-content/uploads/2015/03/monosignal.mp3"][/audio]

(B) Modified audio file with 3D stereo effects (note: you need headphones to hear the effects):

[audio mp3="https://blog.altair.com/wp-content/uploads/2015/03/stereosignal.mp3"][/audio]
